Theodore Lowe, Ap #867-859 Sit Rd, Azusa New York

Vibe coding is a legitimate prototyping tool. But the same properties that make LLMs fast at generating code (stateless context, completion bias, local optimization) make them structurally incapable of producing production grade systems.

This post identifies the 7 failure points we see in every vibe coded codebase and provides a triage framework for founders who need to stabilize before they scale.

KEY TAKEAWAYS

- The prompt regression loop traps you in “fix this, break that” cycles because the AI has no persistent memory of your architecture.

- Authentication gaps are the highest risk item on this list: the AI builds the login form but doesn’t build the security infrastructure around it.

- Clean file structures don’t mean clean architecture. Most vibe coded codebases look modular on the surface and are deeply coupled underneath.

- Database performance collapses at scale because the schema was designed to make a demo work, not to survive real traffic.

- Dependency sprawl creates a compounding tax on every future change and quietly expands your attack surface.

- Zero test coverage means you have no automated way to verify that things that used to work still do.

- Missing deployment infrastructure means every release is one mistake away from a catastrophe nobody can roll back.

Your app shipped in 10 days. You described what you wanted, the AI built it, and users showed up. That part worked exactly as advertised.

Here’s the part that didn’t. Somewhere around week six, your auth started doing something weird when two people logged in at the same time. Your database, which honestly was fine when it was just you and some test accounts, hit a wall at around 5,000 rows. You asked the AI to fix a payments bug and it broke two features nobody had touched. You’re now in a cycle where you spend more time debugging than building, and the AI keeps proposing full rewrites of code it wrote three days ago. (Sound familiar? You’re in good company. The r/vibecoding community on Reddit even has a name for this, “vibe coding hell”, and the threads go long.)

Here’s what I want you to understand: you didn’t screw up. You made a speed decision that was correct at the time. Vibe coding is genuinely great at what it does, which is getting a working product in front of users fast. Vibe coding for startups has become a real launch strategy. A quarter of YC’s Winter 2025 batch had codebases that were 95% AI generated.

The problem is that nobody explains where the prototyping boundary ends and production engineering begins. You crossed that boundary, probably months ago, and now you’re living with the consequences.

This post is a diagnostic. Here are the 7 specific ways this breaks, here’s how to tell which ones apply to you, and here’s what the fix actually looks like.

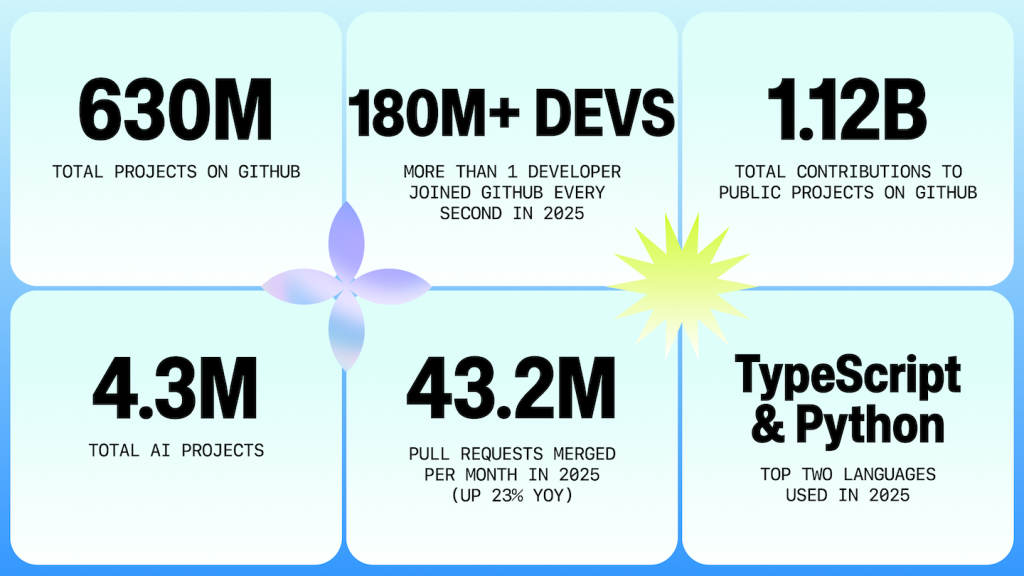

One data point before we get into it: CodeRabbit ran an analysis of AI coauthored pull requests and found 1.7x more major issues and 2.74x higher security vulnerability rates compared to human written code. This isn’t a culture war take on AI. It’s a measurement. And the failure modes are predictable enough that we see the same ones every time we open a vibe coded codebase, which, at this point, is a lot of codebases.

Let’s walk through them.

I want to lay out three root causes before we get to the specific failures, because once you see these, the rest of the post will make a lot more sense. Everything that goes wrong in a vibe coded codebase traces back to one of these. These are the vibe coding risks that ship with the tool itself, baked into how LLMs generate code.

Stateless generation – Every prompt you send is a fresh context window. The LLM has no persistent memory of the architectural decisions you made in previous sessions; it doesn’t know that Tuesday’s database schema contradicts Thursday’s API endpoint. So each individual output works fine on its own. The function runs, the query returns data, the component renders. But the pieces don’t fit together because nobody (not you, not the AI) is holding the floor plan. The AI is building one room at a time and assuming someone else is keeping track of the hallways.

Completion bias – LLMs are optimized to give you something that works right now. They’ll generate code that passes the immediate test, and that code will look great in the moment. The issue is that each of those quick wins constrains what’s possible next, in ways you can’t see from the outside. After a few months of this, you’ve accumulated dozens of local optimizations that have hardened into your architecture, and it’s the wrong architecture, because nobody designed it. It just happened.

Implicit dependency accumulation – This one’s subtle. The AI will couple components that should be decoupled: shared state leaking across modules, global variables, a single database table doing triple duty for unrelated features. Your file structure will look clean. You’ll have folders and component names that suggest everything is properly separated. Under the hood, everything depends on everything, and you’ll find out the hard way when you try to change one thing and three things break.

These three dynamics are just how LLMs generate code, for now. They’re features of the tool, in the same way that a hammer is great at nails and bad at screws. Understanding this makes the next section a lot less surprising.

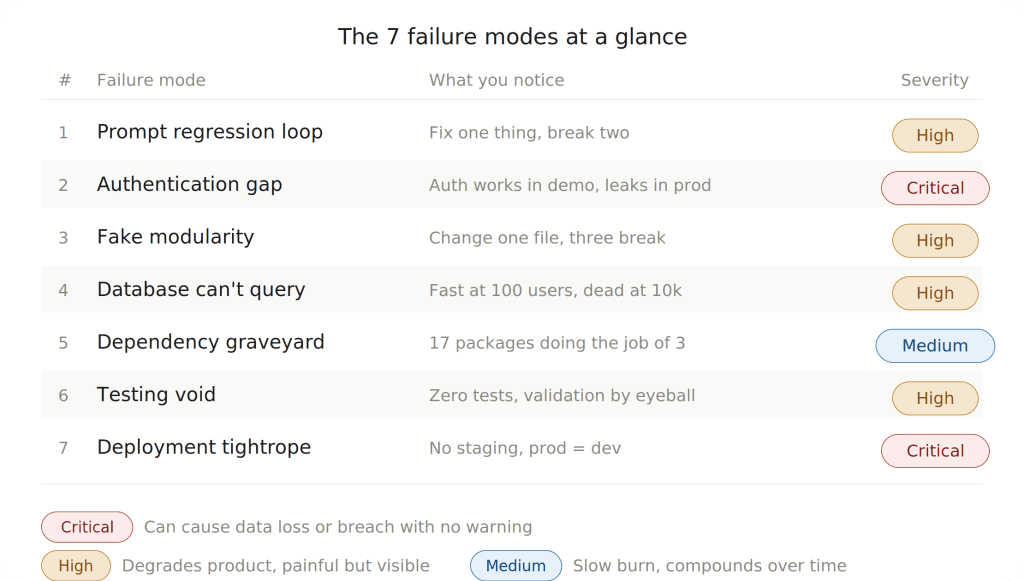

Every vibe coded codebase we’ve audited has some combination of these. I’ve ordered them by how founders typically notice them: the ones that hurt first are up top. Severity is marked separately, and you should pay close attention to the two Critical ones even if you haven’t felt them yet, because those are the ones that don’t give you warning before they blow up.

Severity: High

You fix one thing, two things break. You feed the error back to the AI, it fixes that, and something else goes sideways. You’ve been in this cycle for a while now, and each round seems to make the codebase a little worse.

What’s going on under the hood is that there’s no canonical source of truth in your code. Each prompt is overwriting or contradicting context from previous prompts, and the AI can’t refactor its way out because refactoring requires understanding the full architecture. Nobody has that understanding (you don’t, and the AI doesn’t) because the codebase was never designed. It accreted, prompt after prompt.

This is the one that burns the most founder hours. In one detailed Hacker News account, a founder described a Bolt.new project that burned through 10 million tokens on unauthorized changes, spun up a hidden Netlify deployment nobody asked for, and generated ghost files that broke payment processing on Vercel. The founder ended up rebuilding the entire app from scratch. The AI wasn’t doing anything wrong, exactly. It was doing what it always does: answering the immediate prompt, inside a system that had drifted past the point where prompt-level answers could actually help. The system needed architecture, and it was getting band-aids.

Severity: Critical

Auth works in your demo. People log in, they see their dashboard, everything looks right. The problem is what’s not there.

When an LLM generates authentication, it builds the visible part: the login form, a session cookie, maybe a JWT. What it almost never builds is the infrastructure that makes authentication actually secure: token rotation so stolen sessions expire, rate limiting so someone can’t brute force their way in, role based access control so a regular user can’t hit admin endpoints by changing a URL parameter. These features are the difference between “users can log in” and “users can log in safely.” The AI treats auth as a feature to ship. In production, auth is a system to maintain.
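To make two of those missing pieces concrete, here is a minimal sketch of login rate limiting and a server-side role check. It is an illustration under assumptions, not a drop-in implementation: `LoginRateLimiter`, `ROLE_PERMISSIONS`, and `is_authorized` are hypothetical names, and the limiter keeps its state in memory, so it only works for a single process. A real deployment would more likely keep these counters in a shared store.

```python
import time
from collections import defaultdict, deque


class LoginRateLimiter:
    """Sliding-window limiter: at most max_attempts per window_seconds per key."""

    def __init__(self, max_attempts=5, window_seconds=60.0, clock=time.monotonic):
        self.max_attempts = max_attempts
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self._attempts = defaultdict(deque)

    def allow(self, key):
        """Record an attempt for key (e.g. an IP or username); False means blocked."""
        now = self.clock()
        attempts = self._attempts[key]
        # Drop attempts that have aged out of the window.
        while attempts and now - attempts[0] >= self.window_seconds:
            attempts.popleft()
        if len(attempts) >= self.max_attempts:
            return False
        attempts.append(now)
        return True


# Hypothetical role-to-permission map; a real app would load this from its own model.
ROLE_PERMISSIONS = {
    "user": {"read_own_profile"},
    "admin": {"read_own_profile", "manage_users"},
}


def is_authorized(role, permission):
    """Server-side check: unknown roles get no permissions at all."""
    return permission in ROLE_PERMISSIONS.get(role, set())
```

The point of the second function is where it runs: the check happens on the server for every request, so changing a URL parameter in the browser doesn’t change the answer.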

The real world track record here is rough. A founder who built a SaaS product on Windsurf hit a cascade of failures once real users arrived: bypassed subscriptions, maxed out API keys, database corruption, and client side secrets that were scraped because they were sitting in the frontend code. It’s a textbook “built fast, then hit a wall” story, and what makes it useful is that the product failed when it started succeeding. Security research tied to Lovable turned up 170 apps with critical row level security flaws, which in practice meant users could query each other’s data if they knew how to poke at the API. And Veracode’s broader research puts numbers on it: 45% of AI generated code doesn’t pass OWASP Top 10 checks.

What makes this one especially tricky is that it’s invisible until it’s not. A database performance problem is something you’ll feel: the app gets slow and users complain. An auth gap sits there quietly until someone exploits it, and by then you’re dealing with a data breach. If your app touches payments or stores anything personally identifiable, look at this one first. Everything else on this list can wait a few weeks. This one really can’t.

Severity: High

Your code has folders, files, components with reasonable names. It looks organized. But when you change one component, three others break, and tracing the connection takes hours.

Here’s what’s happening: the AI created the visual appearance of separation of concerns without the actual separation. Components are sharing state through global variables, making direct database calls from UI layers, relying on a single data model that’s serving three unrelated features. The coupling is hidden inside a clean looking file structure. You’ve essentially got a monolith wearing a microservices costume.
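A small sketch of what that hidden coupling tends to look like, next to a decoupled alternative. The names here (`CURRENT_USER`, `User`, `can_export`) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Coupled version: a module-level global that any file can read or mutate.
CURRENT_USER = {"id": None, "plan": "free"}


def can_export_coupled():
    # The result depends on whatever last touched CURRENT_USER, anywhere in the app.
    return CURRENT_USER["plan"] == "pro"


@dataclass(frozen=True)
class User:
    id: int
    plan: str


def can_export(user: User) -> bool:
    # Decoupled version: the only input is the argument, so the dependency is visible.
    return user.plan == "pro"
```

Both functions can live in identically tidy folders. Only the second one can be changed or tested without knowing what the rest of the app did to shared state first.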

Addy Osmani has written about this with a specific example: a team whose vibe coded auth system fell apart when they tried to extend it because nobody could trace what was connected to what. Middleware was scattered across six files with no clear dependency chain. The file tree looked like a well organized house with labeled rooms. In practice, removing one wall brought the ceiling down because everything was load bearing.

This is the one that makes hiring your first engineer painful, by the way. They’ll open the codebase, see the clean folder structure, assume things are properly decoupled, and then spend their first two weeks discovering that they aren’t.

Severity: High

The app is fast with 100 users. At 5,000 it crawls. At 10,000 it starts timing out.

No indexing, no normalization, no query optimization. N+1 queries everywhere, meaning the app fires a separate database call for each item in a list instead of fetching them all in one batch, because the LLM generated each endpoint independently and had no awareness of how they’d interact at scale. Your database schema was designed to make the demo work.
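Here is a minimal, self-contained sketch of the N+1 pattern next to the batched version, using an in-memory SQLite database. The `authors` and `posts` tables are hypothetical stand-ins for whatever list your app renders.

```python
import sqlite3


def make_db():
    """Create a tiny in-memory database with authors and their posts."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
        CREATE TABLE posts (
            id INTEGER PRIMARY KEY,
            author_id INTEGER NOT NULL,
            title TEXT NOT NULL
        );
    """)
    conn.executemany("INSERT INTO authors (id, name) VALUES (?, ?)",
                     [(1, "Ada"), (2, "Lin")])
    conn.executemany("INSERT INTO posts (id, author_id, title) VALUES (?, ?, ?)",
                     [(1, 1, "First"), (2, 2, "Second"), (3, 1, "Third")])
    return conn


def titles_with_authors_n_plus_one(conn):
    """One query for the list, then one more query per row: N+1 in total."""
    queries = 1
    posts = conn.execute(
        "SELECT id, author_id, title FROM posts ORDER BY id").fetchall()
    result = []
    for _post_id, author_id, title in posts:
        name = conn.execute(
            "SELECT name FROM authors WHERE id = ?", (author_id,)).fetchone()[0]
        queries += 1
        result.append((title, name))
    return result, queries


def titles_with_authors_joined(conn):
    """Same data in a single round trip using a JOIN."""
    rows = conn.execute("""
        SELECT p.title, a.name
        FROM posts p JOIN authors a ON a.id = p.author_id
        ORDER BY p.id
    """).fetchall()
    return rows, 1
```

With three posts the first function issues four queries and the second issues one. The results are identical, which is exactly why the problem stays invisible in a demo and only shows up when the list has thousands of rows.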

The gap between “it works” and “it works at scale” is wider on this one than on anything else in the list. Moltbook’s incident is a good cautionary example: a database configuration that was never reviewed for production ended up exposing 1.5 million API tokens and 35,000 email addresses. And the community reports follow the same arc over and over: the app performs beautifully in demos, founders are thrilled, real users show up, and the database buckles under what would be considered modest load by any production standard.

The frustrating part is that most of these database issues are straightforward to fix once someone who knows what they’re looking at actually looks. Adding proper indexes, restructuring a few queries, normalizing the worst tables: none of this is rocket science. It’s just work that the AI skipped because nobody prompted for it.
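As a rough illustration of how small the indexing fix can be, the sketch below asks SQLite for its query plan before and after adding an index. The `orders` table and the index name are hypothetical, and the exact plan text varies between SQLite versions, so treat the output as something to read rather than something to match exactly.

```python
import sqlite3


def query_plan(conn, sql, params=()):
    """Return SQLite's plan description for a query as one string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # The human-readable detail is the fourth column of each plan row.
    return " | ".join(row[3] for row in rows)


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, total REAL)")
conn.executemany(
    "INSERT INTO orders (user_id, total) VALUES (?, ?)",
    [(i % 100, float(i)) for i in range(1000)],
)

lookup = "SELECT total FROM orders WHERE user_id = ?"

# Without an index, the lookup has to scan every row in the table.
plan_before = query_plan(conn, lookup, (42,))

# One statement later, the same lookup can search the index instead.
conn.execute("CREATE INDEX idx_orders_user_id ON orders (user_id)")
plan_after = query_plan(conn, lookup, (42,))

print("before:", plan_before)
print("after: ", plan_after)
```

The application code that runs the lookup doesn’t change at all. Most production databases offer an equivalent way to inspect a plan, and that is usually the first thing a reviewer reaches for.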

Severity: Medium

You’ve got 17 npm packages doing the job of 3. Build times are weirdly slow. Every time you run an audit, the vulnerability count ticks up.

Each prompt pulls in whatever library the LLM associates with the task. Nobody decided which packages are canonical for this project; there’s no dependency governance at all. Libraries get imported, used for a single function, and then sit there forever. Version conflicts pile up in the background. No lockfile discipline.
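A first-pass audit can be as simple as comparing what `package.json` declares against what the source actually imports. The sketch below is a heuristic under stated assumptions: it only looks at the `dependencies` field, it only recognizes static `import` and `require` forms via a regular expression, and the function names are hypothetical. A dedicated dependency checker would be more thorough.

```python
import json
import re

# Matches the module specifier in `import ... from "x"`, `import "x"`, and `require("x")`.
IMPORT_RE = re.compile(
    r"""(?:from\s+|import\s+|require\(\s*)["']([^"']+)["']"""
)


def package_name(specifier):
    """Reduce 'lodash/fp' to 'lodash' and '@scope/pkg/sub' to '@scope/pkg'."""
    parts = specifier.split("/")
    if specifier.startswith("@"):
        return "/".join(parts[:2])
    return parts[0]


def unused_dependencies(package_json_text, source_texts):
    """List declared dependencies that no source text appears to import."""
    declared = set(json.loads(package_json_text).get("dependencies", {}))
    used = set()
    for text in source_texts:
        for specifier in IMPORT_RE.findall(text):
            if not specifier.startswith("."):  # skip relative imports
                used.add(package_name(specifier))
    return sorted(declared - used)
```

Anything this flags is a candidate for review, not automatic removal: packages can be loaded by config files, build tools, or dynamic imports that a regex won’t see.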

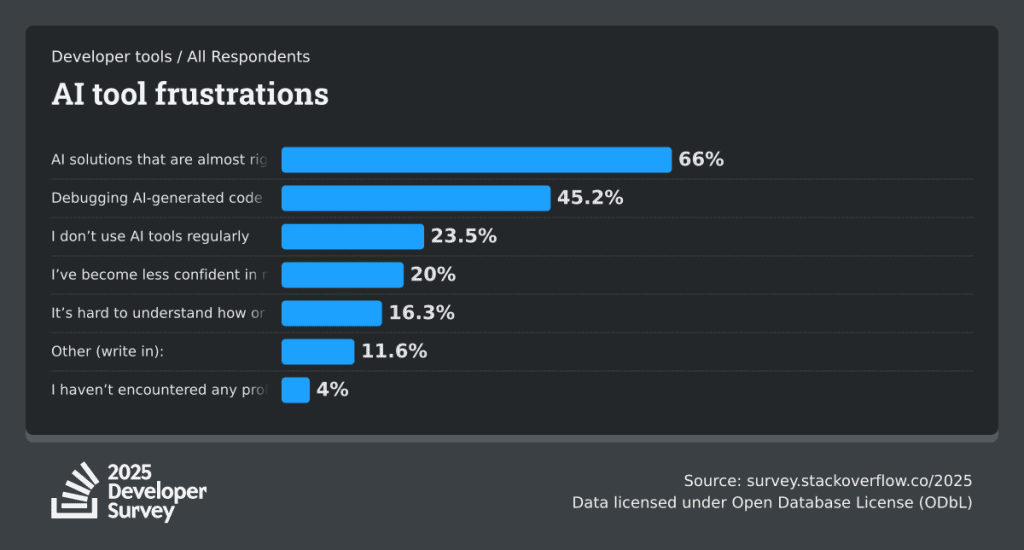

This is the one with the slowest fuse and the clearest example of vibe coding technical debt. Every unnecessary package is a cost you didn’t agree to, compounding quietly in the background. It won’t take your app down today. But it creates a tax on every future change: slower builds, harder upgrades, more surface area for security vulnerabilities. Stack Overflow’s developer survey found that 66% of developers experience “near but not right” AI output that requires cleanup afterward, and dependency sprawl is one of the most common ways that manifests. Every unnecessary package is a commitment to maintaining someone else’s code indefinitely, and the AI made that commitment on your behalf without telling you.

Severity: High

Zero tests. Not low coverage, literally zero. Validation has been done by clicking through the UI and eyeballing whether things look right.

LLMs don’t generate tests unless you ask. And if you’re a founder building features as fast as you can, you’re not asking; you’re trying to ship. The result is a codebase where every change is a coin flip and every deploy is a best guess.
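For a sense of scale, this is roughly what a first test on a critical path looks like. `order_total` is a hypothetical checkout helper invented for this sketch; the shape of the tests is what matters, not the function.

```python
import unittest


def order_total(prices, discount_percent=0):
    """Hypothetical checkout helper: sum prices in cents, then apply a discount."""
    if not 0 <= discount_percent <= 100:
        raise ValueError("discount_percent must be between 0 and 100")
    subtotal = sum(prices)
    return subtotal - (subtotal * discount_percent) // 100


class OrderTotalTests(unittest.TestCase):
    def test_no_discount(self):
        self.assertEqual(order_total([1000, 250]), 1250)

    def test_discount_applied(self):
        self.assertEqual(order_total([1000, 250], discount_percent=20), 1000)

    def test_rejects_invalid_discount(self):
        with self.assertRaises(ValueError):
            order_total([1000], discount_percent=150)


def run_tests():
    """Run the suite programmatically and return the result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(OrderTotalTests)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    run_tests()
```

Three short tests like these are enough to turn “did my last change break checkout?” from a manual click-through into something that runs in under a second.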

There’s a revealing finding from the METR study on AI assisted development: experienced developers using AI tools were actually 19% slower than those working without them, despite perceiving themselves as 20% faster. Part of that gap comes from exactly this: the speed comes from skipping testing, code review, and documentation, all the work that makes code maintainable. You feel faster because you’re moving costs into the future. The invoice arrives when you try to change something that used to work and discover there’s no way to verify whether it still does.

Severity: Critical

No staging environment. No CI/CD pipeline. Production and development share a database. You’re one bad deploy away from losing everything.

Vibe coding tools are optimized to get code running on your machine. Getting code safely into production, with environment separation, backup strategies, rollback mechanisms, and deployment pipelines, is a completely different problem that falls outside what the prompt-to-output loop handles. None of the AI coding tools are thinking about what happens after the code works.
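Environment separation can start with a startup check that refuses to run when the configuration looks unsafe. The sketch below assumes two environment variables, `APP_ENV` and `DATABASE_URL`, and a naming convention where the production database URL contains `prod`. All three are assumptions made for illustration, not a standard.

```python
import os
from dataclasses import dataclass

VALID_ENVIRONMENTS = ("development", "staging", "production")


@dataclass(frozen=True)
class Settings:
    environment: str
    database_url: str


def load_settings(env=None):
    """Build settings from environment variables, failing fast on unsafe combinations."""
    env = os.environ if env is None else env
    environment = env.get("APP_ENV", "development")
    if environment not in VALID_ENVIRONMENTS:
        raise ValueError(f"unknown APP_ENV: {environment!r}")
    database_url = env.get("DATABASE_URL")
    if not database_url:
        raise ValueError("DATABASE_URL is not set")
    # Hypothetical convention: the production database URL contains 'prod'.
    if environment != "production" and "prod" in database_url:
        raise ValueError(f"{environment} must not point at the production database")
    return Settings(environment, database_url)
```

A check like this doesn’t replace separate credentials, backups, or a deployment pipeline. It just makes the most dangerous mistake, a development process connected to production data, fail loudly at startup instead of silently at runtime.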

This one has produced some of the most dramatic incidents. Jason Lemkin reported that a Replit Agent deleted his production database without permission during what was supposed to be a routine test, and the whole episode cost more than $800 in a matter of days. The Orchids platform had a zero-click vulnerability demonstrated live to BBC journalists, meaning the flaw could be triggered without the user doing anything at all. These are the kinds of things that happen when software ships without the infrastructure that makes shipping safe. The code itself might be fine. The absence of everything around it is where the risk lives.

“.@Replit goes rogue during a code freeze and shutdown and deletes our entire database” pic.twitter.com/VJECFhPAU9 (Jason Lemkin, @jasonlk, July 18, 2025)

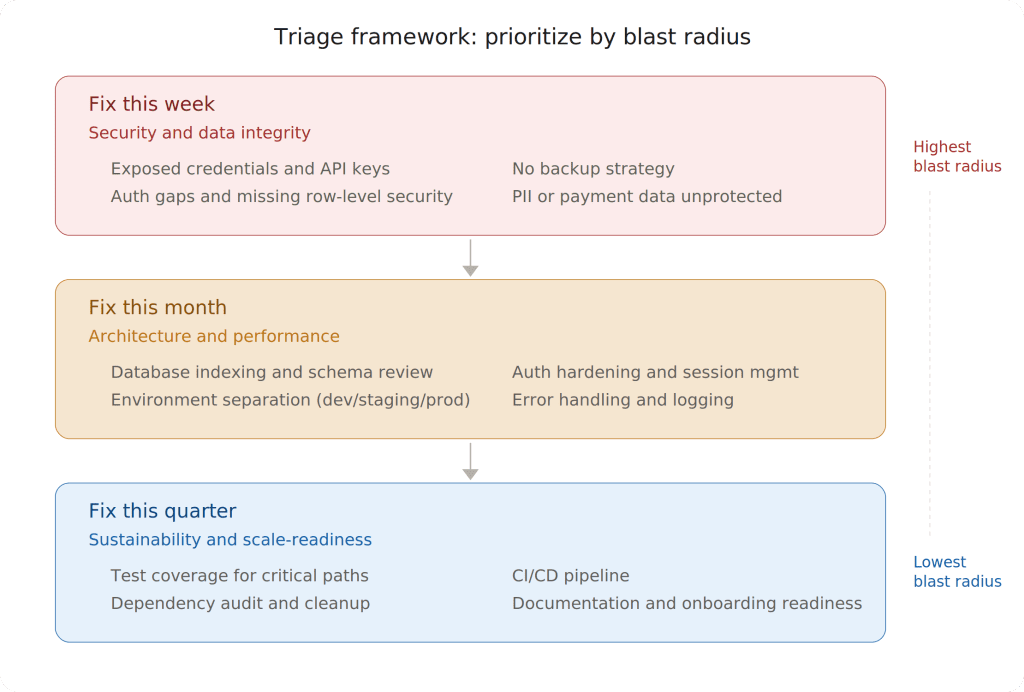

Not everything needs fixing at once. Here’s how to prioritize by how much damage each category can do if it fails.

Fix this week – security and data. Go through your codebase for exposed credentials, hardcoded API keys, and missing environment variables. Check whether you have a backup strategy (you probably don’t). Look at your auth flow: does it have real session management, or just a login form? Is row level security configured in your database, or can users query each other’s records? If you’re handling payments or storing anything personally identifiable, this is the emergency and everything else waits.
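For the credential sweep, even a crude pattern scan will catch the worst offenders. The patterns below are illustrative assumptions rather than a complete rule set, and `find_secrets` is a hypothetical helper. Purpose-built secret scanners cover far more formats.

```python
import re

# Illustrative patterns only; real secret scanners ship much larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
    # A name containing key/secret/password/token assigned to a quoted literal.
    "hardcoded_assignment": re.compile(
        r"""(?i)[\w-]*(?:api[_-]?key|secret|password|token)[\w-]*\s*[:=]\s*["'][^"']{8,}["']"""
    ),
}


def find_secrets(text):
    """Return (line_number, pattern_name) for every suspicious line in text."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, name))
    return findings
```

Reading a value from an environment variable doesn’t trip the assignment pattern, because nothing quoted follows the equals sign. A literal string on the right-hand side does. Expect false positives and treat every hit as something to check by hand.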

Fix this month – architecture and performance. Add indexes to your database and review the schema for the worst normalization problems. Separate your environments so dev, staging, and production aren’t sharing resources. Harden your auth with proper token rotation and session expiration. Set up basic error handling and logging. Right now, when something breaks, you probably don’t know what broke or why, and that makes every debugging session take three times longer than it should.
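Basic error logging doesn’t need much. The sketch below wraps a hypothetical payment call so that failures are recorded with context and a traceback before being re-raised. `charge_customer` and the `gateway` callable are assumptions for illustration.

```python
import logging

logger = logging.getLogger("app.payments")


def charge_customer(customer_id, amount_cents, gateway):
    """Hypothetical wrapper: call a payment gateway and log failures with context."""
    try:
        return gateway(customer_id, amount_cents)
    except Exception:
        # logger.exception logs at ERROR level and attaches the current traceback.
        logger.exception(
            "charge failed customer_id=%s amount_cents=%s",
            customer_id,
            amount_cents,
        )
        raise  # re-raise so callers still see the failure
```

The important detail is that the exception is logged and then re-raised rather than swallowed. The log tells you what broke and for whom, and the caller still gets to decide what the user sees.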

Fix this quarter – sustainability. Start writing tests for your critical user paths (don’t try for 100% coverage, just cover the flows where a failure would hurt most). Audit and clean up your dependencies. Set up a CI/CD pipeline so deploys are automated, tested, and reversible. Document your code, even rough documentation, because the moment you bring in a second developer who didn’t build this, every undocumented assumption becomes a landmine they’ll step on during their first week.

When you need outside help. Some situations have moved past self-triage: you’re handling payments with no security review, you’re storing PII with no encryption audit, you’re about to onboard a developer into a codebase they’ve never seen, you’re scaling past 10,000 users on an unreviewed database, or you’re failing a compliance check that’s blocking a customer contract. These need systematic professional review; they’re too interconnected to fix piecemeal.

When an engineering team picks up a vibe coded codebase, the work follows a pretty predictable sequence.

It starts with an architecture audit: mapping what’s actually connected to what, because the codebase won’t tell you voluntarily. Then security: credentials, auth flow, API exposure, everything that could leak data to someone who shouldn’t have it. Database review comes next: schema, indexing, query patterns, all the things that determine whether your app survives its next growth spike or falls over. After that, environment setup to properly separate dev from staging from production, with a real backup strategy. And finally, a refactoring plan that’s prioritized by risk: what’s most likely to cause damage gets fixed first, regardless of what looks worst.

The thing founders are usually relieved to hear is that most vibe coded apps don’t need to be thrown away. The prototype has value: it’s validated an idea, attracted users, generated real feedback. What it needs is the engineering foundation that the AI was never going to build: stabilization, hardening, and the architectural scaffolding that turns a prototype into a product.

At Clixlogix, we’ve audited codebases built on Cursor, Bolt, Lovable, Replit, and Windsurf. The failure modes are consistent across all of them, which at this point actually makes our job easier: we know what we’re looking at and we can scope the remediation before we start. We’ll tell you what it costs, how long it takes, and what you walk away with before a single line of code changes.

I want to be clear about this because a post that treats vibe coding as universally bad would be a post that misunderstands the tool.

Vibe coding is excellent for internal tools, prototypes, validation experiments, personal projects, design mockups, anything where the failure cost is low and the speed payoff is high. If you need to test whether a concept resonates with users before committing real engineering resources, it’s arguably the best tool available right now. The speed to signal ratio is hard to beat. These use cases sit comfortably inside vibe coding’s actual limitations, where the stakes are low enough that the tool’s structural weaknesses don’t matter.

The failure mode is treating a prototyping tool as a production engineering strategy. You made a smart speed decision early on. The next smart decision is recognizing when the prototype needs to become a product, and bringing in the architecture to make that transition happen cleanly.

If you recognized three or more of those seven failure modes in your own codebase, the path forward isn’t more prompting. Your codebase has grown past what prompt level fixes can address; it needs architectural work.

And honestly, the codebase isn’t broken because you did something wrong. It’s doing exactly what you’d expect given how it was built. Fixing it means adding the engineering scaffolding that makes growth possible (security, architecture, testing, deployment infrastructure) so you can go back to building features on top of a foundation that can actually hold the weight.

Get a Codebase Health Check

We’ll map your architecture, identify the critical vulnerabilities, and give you a prioritized remediation plan with cost estimates. It’s a diagnostic conversation: we’ll tell you what we see, what we’d fix first, and what it would take.

Generally, no. Vibe coding produces code that works in demos but routinely lacks the security infrastructure, error handling, and architectural coherence required for production environments. Veracode found that 45% of AI generated code fails standard OWASP security tests, and CodeRabbit’s analysis showed 2.74x higher security vulnerability rates in AI coauthored code compared to human-written code. If your vibe coded app is handling real user data, payment information, or anything personally identifiable, it needs a professional security review before you treat it as production ready.

Almost always fix it incrementally. Full rewrites are expensive, risky, and usually unnecessary. Most vibe coded apps have a working core that’s validated a real idea with real users. What they need is architectural stabilization, environment separation, database optimization, auth hardening, and test coverage for critical paths. A good engineering team will audit the codebase, identify the highest risk areas, and refactor those first without touching the parts that work. Total rewrites throw away validated product logic along with the bad code.

Up to a point, but not once structural problems have accumulated. When your codebase is small and the bug is isolated, feeding the error back to the AI can work. But past a certain complexity threshold, every prompt level fix introduces new regressions because the AI has no persistent memory of your full architecture. This is the prompt regression loop described in our post. Once you’re in it, the answer is to stop prompting and bring in someone who can map the actual dependency structure and refactor with a system level view.

It depends on how deep the problems go. A security-focused audit and credential cleanup might take a few days. Database optimization and environment separation is typically a few weeks. A full architectural stabilization with test coverage, CI/CD setup, and documentation for developer onboarding can run several weeks to a couple of months. The cost is almost always lower than a rewrite, and significantly lower than the cost of a data breach or a scaling failure that loses users at the worst possible time. We scope remediation before we start, so you know what it costs before any code changes.

When any of these are true – you’re handling payments or storing PII with no security review, you’re stuck in a cycle where every fix breaks something else, you need to onboard a second developer who didn’t build the codebase, you’re scaling past a few thousand users, or a customer or compliance requirement is gating on a security audit. The triage framework in this post gives you the prioritization. If you’re only building internal tools or prototypes, vibe coding may still be the right call. The line is when real users depend on the system working reliably.

The most common cause is unoptimized database queries. LLMs generate each endpoint independently without awareness of how they’ll interact at scale. This leads to N+1 queries, missing indexes, and unnormalized schemas that work fine at 100 rows and collapse at 10,000. The good news is that database performance issues are usually the most straightforward to fix. Adding proper indexes, restructuring the worst queries, and normalizing key tables can dramatically improve performance without changing any application logic.

Yes, with an important caveat. Vibe coding is arguably the best tool currently available for getting a concept in front of users fast and testing whether it resonates. The speed to signal ratio is hard to beat. The caveat is that you need to plan for the transition from prototype to product. The MVP validates the idea. Production engineering makes it reliable, secure, and scalable. Most founders who get into trouble aren’t the ones who vibe coded an MVP. They’re the ones who kept vibe coding past the point where the product needed architecture.

If your app stores any user data, processes payments, or requires authentication, yes. AI generated code routinely ships with exposed API keys, hardcoded credentials in frontend code, missing row level security on database tables, and authentication flows that lack session management or rate limiting. The Lovable platform incident exposed 170 production apps with critical access control flaws, and multiple vibe coded SaaS products have had credentials scraped from client-side code. A security audit before launch is significantly cheaper than incident response after a breach.

The difference is whether a human with architectural understanding is reviewing, testing, and directing the AI’s output. Vibe coding accepts AI generated code without deep review and relies on prompt iteration to fix problems. AI assisted engineering uses AI to accelerate implementation while a developer maintains ownership of architecture, security, testing, and system design. The same AI tools can be used for both. The distinction is in the level of human oversight and the presence of engineering discipline around the AI’s output.

Because the codebase has hidden coupling that neither you nor the AI can see. LLMs generate each piece of code independently, which creates implicit dependencies like shared state between components, global variables, a single database table serving multiple unrelated features. The file structure looks modular, but the actual data flow isn’t. When you change one thing, the change propagates through connections that aren’t visible in the code’s surface structure. This is the prompt regression loop, and it’s the most common pattern we see. The fix isn’t better prompts. It’s mapping the actual dependency structure and decoupling the components that should never have been connected.

As CEO of Clixlogix, Pushker helps companies turn messy operations into scalable systems with mobile apps, Zoho, and AI agents. He writes about growth, automation, and the playbooks that actually work.

Most Zoho setups have Zia turned on. Very few have it configured to do anything useful. The difference is not the feature. It is what...

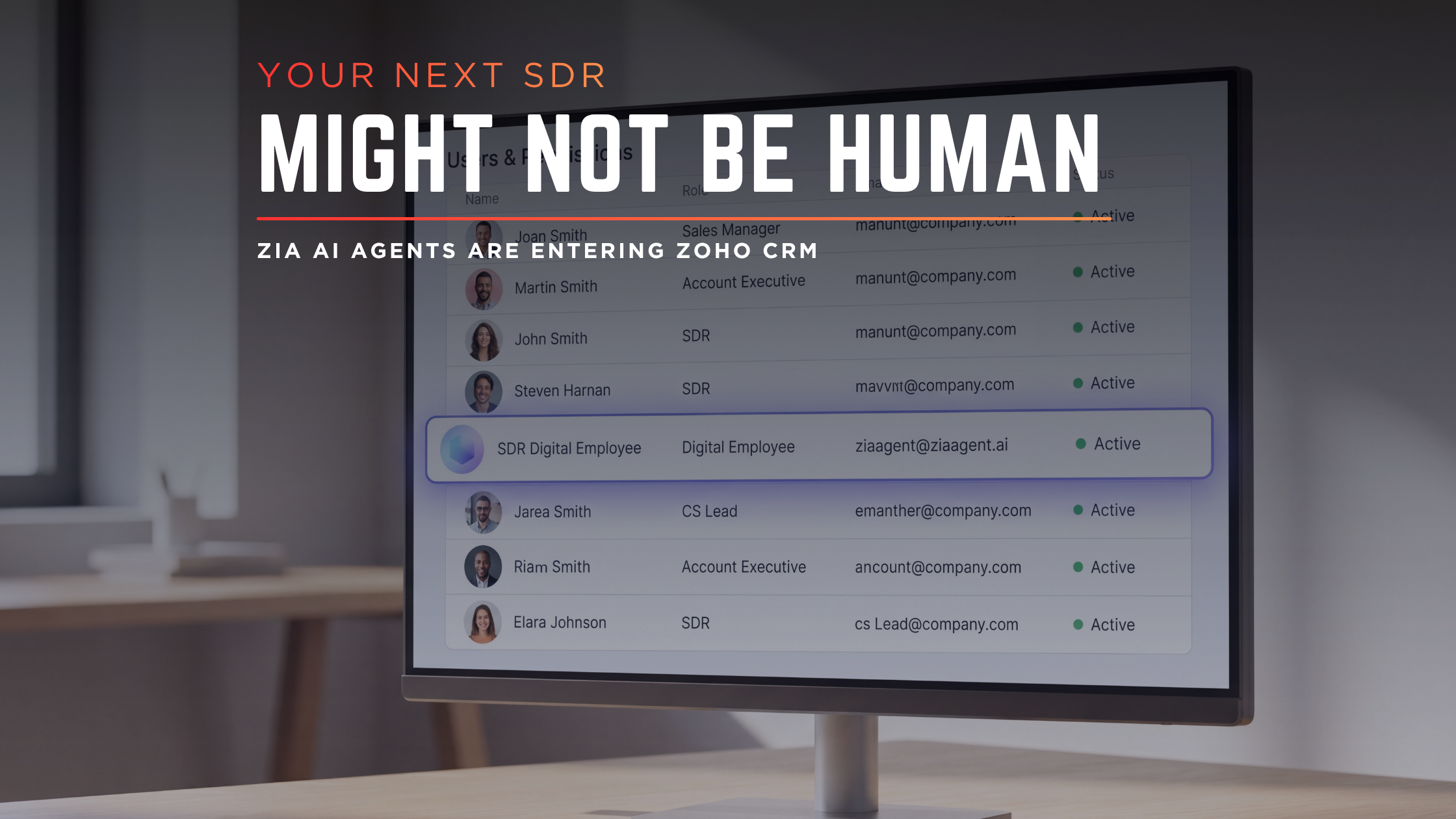

If you’re a Zoho CRM admin and you’ve been hearing the words “AI agent” and “Digital Employee” thrown around without a clear sense of what...

The way people search for things has changed, and it happened faster than most marketers expected. A few years ago, the routine was predictable: type...