Any website with a confused URL structure is doomed to lose on the SEO front. If you or someone you know has been struggling with traffic, perhaps it’s time to have the website’s URLs audited.

A good URL structure makes your website look like a well-arranged folder of web documents to search engine spiders, which in turn award such websites more authority than competitors who are either uninitiated or careless about their URL structure.

Along with URL structure, Google looks at various other search engine signals when it indexes your website, e.g. backlinks, meta tags, content, domain age, page load speed, alt tags and many more on-page elements. By leveraging an SEO-friendly URL structure, you can not only rank higher in the SERPs but also offer a much better and more memorable user experience.

So first we’ll take a look at the SEO best practices for structuring URLs on your website, and then explore a few content management systems that let you customize URLs by default:

11 Best SEO Practices for Structuring URLs

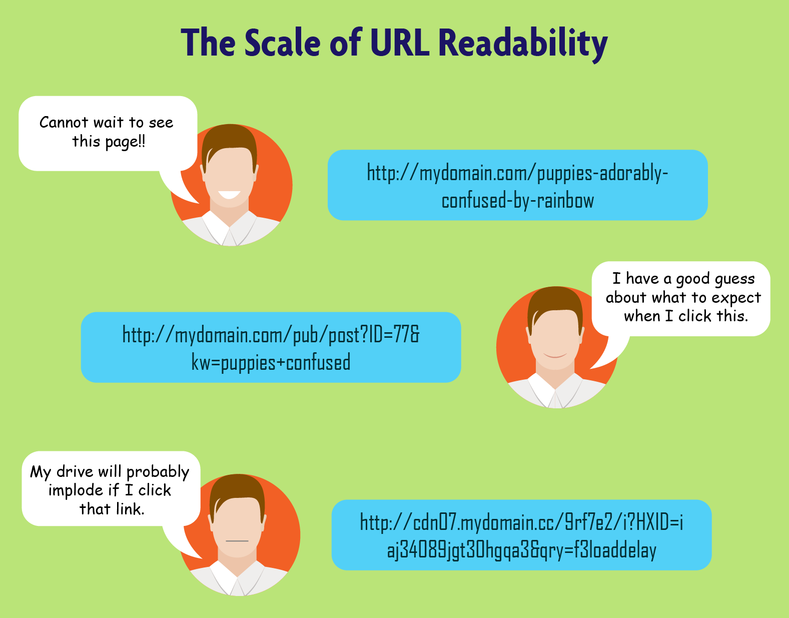

#1. Accessibility: The more readable the URL is to humans, the better.

Accessibility has always been an important part of SEO. Keeping the URL straightforward and meaningful always helps, because a person can then memorize the URL if need be. Take a look at the following example:

#2. Subdomains vs Subdirectories: With recent changes, Google looks at subdirectories and subdomains differently, and the choice between them is no longer as clear-cut as it once was. Links pointing to a subdomain now still pass some credit to the root domain, but it’s generally best to go with subfolders. There are many reports of people who moved their content from a “sub.domain” to a “sub/folder” and saw a rise in rankings and traffic. All the links you earn on a subfolder help the root domain, whereas with subdomains you only get a portion of the total value.

But can subdomains hurt your SEO?

Subdomains are a common tactic for lots of brands. They may have different sites for various countries, for example, or variations on the main brand offering. Here are some points to keep in mind:

- Subdomains MAY inherit some or all of the ranking benefits of the root domain they’re on, but it’s inconsistent and hard to know for certain. In cases like .org or blogspot.com, a single subdomain/site is almost certainly not inheriting these ranking properties while on subdomains like blog.mydomain.com, they will much of the time (though not always).

- PageRank (at least, in the classic, Google ranking formula sense as well as the green pixels in the toolbar) will not be passed from a domain to a subdomain – it’s passed only via links and applies to pages (not domains or subdomains as a whole). That said, the concept of a domain’s importance on the domain-level link graph, could indeed be passed in some cases and not others – hard to know.

So the myth that having subdomains will never hurt your rankings can be done away with. There are situations where subdomains work well, and other situations where you can end up cannibalizing keywords and site authority.
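If you do decide to move content from a subdomain into a subfolder, each old URL needs a 301 redirect to its new home. The following is only a minimal sketch of how such a redirect map could be computed; `ROOT_DOMAIN`, `MAIN_HOST` and the function name are hypothetical, and it assumes a single-level subdomain with no port in the host.

```python
from urllib.parse import urlsplit, urlunsplit

# Hypothetical values for illustration; substitute your own domain.
ROOT_DOMAIN = "example.com"
MAIN_HOST = "www.example.com"


def subdomain_to_subfolder(url: str) -> str:
    """Map sub.example.com/page to www.example.com/sub/page.

    URLs that are not on a subdomain of ROOT_DOMAIN are returned unchanged.
    """
    parts = urlsplit(url)
    host = parts.netloc.lower()
    suffix = "." + ROOT_DOMAIN
    if host == MAIN_HOST or not host.endswith(suffix):
        return url
    sub = host[: -len(suffix)]         # "blog.example.com" -> "blog"
    new_path = "/" + sub + parts.path  # "/blog" + "/seo-friendly-urls"
    return urlunsplit(
        (parts.scheme, MAIN_HOST, new_path, parts.query, parts.fragment)
    )


print(subdomain_to_subfolder("https://blog.example.com/seo-friendly-urls"))
```

The output of this function is the target of the 301 redirect; the redirect itself still has to be configured on your web server or CMS.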

#3. Strategy for Multilingual Websites with URL Structures: A multilingual website is any website that offers content in more than one language. We did a long post earlier about how to optimize SEO URLs for multilingual websites.

Here are a few strategies to implement when managing a website with different language versions:

- Make sure each language version is easily discoverable: Always keep the content for each version on separate URLs. Avoid using cookies to show the translated versions of a page. Consider providing a language-switch button where users can easily spot it, which improves the user experience.

- Choose the URL carefully: Choose the URL naming scheme for the different versions of the website very carefully. The URL itself should give human users enough clues about the page’s language. Here’s a good example:

www.example.com/fr/velo-de-montagne or fr.example.ca/Velo-de-montagne

This URL clearly tells the user that the page is in French.

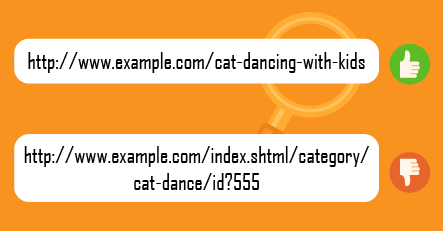

#4. Describe Your Content: If a user can get an idea of a page’s content just by looking at the link, even before visiting it, you have done your job. This also makes URLs easier to share on social media, blogs, forums and elsewhere, because they present themselves well and give users an approximate idea of the content of the page.

Here’s a good example:

#5. Use keywords in the URL, because they never hurt: Yes, keywords in URLs are still important. They are certainly one of the key elements of a successful SEO strategy. Although they don’t carry the same weight they did five years ago, search engine crawlers still start evaluating a web page by its URL, so having your keyword in the URL can boost your position in the SERPs. Remember, though, that overdoing this optimization can result in a page-level penalty, so repeating a keyword in a URL is never a good idea.

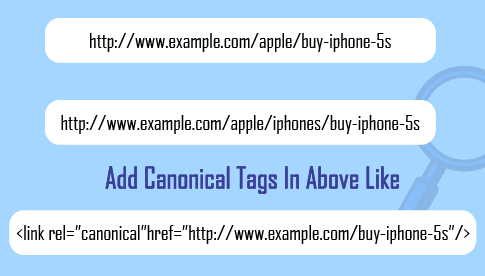

#6. Multiple URLs Serving the Same Content? Canonicalize them: Serving two pages with exactly the same content creates a duplicate-content issue, and Google deals with it very strictly. If there is no real reason to maintain both pages, redirect the secondary copy to the primary one with a 301 redirect and be done with it. If there is a good reason to keep both, use the canonical tag to point the secondary copy at the primary one. The primary page will then stand a better chance to rank and earn traffic.
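As a rough illustration of the canonical tag, the sketch below builds the `<link rel="canonical">` element that would go in the `<head>` of the secondary page. The function name and the example URL are assumptions made for illustration; in practice most CMSs and SEO plugins emit this tag for you.

```python
from html import escape


def canonical_link_tag(primary_url: str) -> str:
    """Build the <link> element that points a duplicate page at the primary URL."""
    # Escape the URL so characters such as & and " are safe inside the attribute.
    return f'<link rel="canonical" href="{escape(primary_url, quote=True)}">'


print(canonical_link_tag("https://www.example.com/mountain-bikes"))
```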

#7. Dynamic Parameters Are a No-No:

Avoid ugly looking URLs like:

Dynamic parameters should be avoided; consider rewriting them as static, readable text. Static is the way and the light. Note, however, that Google’s official webmaster blog describes the benefit as mainly one of readability and click-through rather than of indexing or ranking:

“We’ve come across many webmasters who, like our friend, believed that static or static-looking URLs were an advantage for indexing and ranking their sites. This is based on the presumption that search engines have issues with crawling and analyzing URLs that include session IDs or source trackers. However, as a matter of fact, we at Google have made some progress in both areas. While static URLs might have a slight advantage in terms of clickthrough rates because users can easily read the URLs, the decision to use database-driven websites does not imply a significant disadvantage in terms of indexing and ranking. Providing search engines with dynamic URLs should be favored over hiding parameters to make them look static.”

#8. Stop words are there to go: If the title of your webpage includes stop words such as and, but, of, the, a, etc., it’s preferable to remove them from the URL. The best practice here is to use the minimum number of words that gives a user an accurate idea of what to expect on the page.

www.example.com/a-cat-and-dog-of-a-neighbour

This can be stripped down to

www.example.com/cat-dog-neighbour

Short and sweet.
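The trimming shown above is easy to automate when URLs are generated from page titles. This is a minimal sketch, not a definitive implementation: the `STOP_WORDS` set is a small hypothetical sample, and `slugify` is an illustrative name rather than a function from any particular CMS.

```python
import re

# A small illustrative stop-word list; extend it to suit your content.
STOP_WORDS = {"a", "an", "and", "but", "of", "the"}


def slugify(title: str) -> str:
    """Turn a page title into a lowercase, hyphenated slug without stop words."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    kept = [word for word in words if word not in STOP_WORDS]
    # Fall back to the full word list if the title consisted only of stop words.
    return "-".join(kept or words)


print(slugify("A Cat and Dog of a Neighbour"))  # cat-dog-neighbour
```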

#9. Redirection loops and chains: If a user or crawler visits URL A and it redirects to URL B, that’s fine, but when B in turn redirects to URL C and the chain keeps growing, you are in for trouble. It is always advisable to limit redirect hops to two or fewer.

Search engines will eventually follow these redirects, but they might treat the URLs as less important, and not all the ranking signals may be counted.
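To audit this, you can walk your redirect rules and count the hops. The sketch below assumes the rules are already available as a plain dictionary mapping each source URL to its target (a hypothetical format; real rules would come from your server configuration or a crawl), and it makes no network requests.

```python
def follow_redirects(start: str, redirects: dict, max_hops: int = 10):
    """Follow redirect rules from `start`; return (chain, is_loop)."""
    chain = [start]
    seen = {start}
    while chain[-1] in redirects and len(chain) - 1 < max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if target in seen:
            return chain, True  # we came back to a URL already visited
        seen.add(target)
    return chain, False


rules = {"/a": "/b", "/b": "/c"}  # hypothetical redirect rules
chain, is_loop = follow_redirects("/a", rules)
hops = len(chain) - 1
print(chain, hops, is_loop)
```

Any chain with more than two hops, or any loop, is a candidate for pointing the first URL directly at the final destination.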

#10. Be wary of case sensitivity: URLs can contain both uppercase and lowercase characters, and the two can be treated as different addresses, so don’t ever allow uppercase letters in your structure. Even today many websites have not addressed this problem. It used to be a time-consuming task, but now there are many tools that take care of this for you by automatically canonicalizing or redirecting the URLs. The best practice is to 301-redirect all of them to the all-lowercase version to avoid any confusion.
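The lowercase rule can be sketched as a small helper that decides whether a requested URL needs a 301 redirect. The function name is hypothetical, and the sketch deliberately leaves the query string untouched, on the assumption that parameter values may be case-sensitive.

```python
from urllib.parse import urlsplit, urlunsplit


def lowercase_redirect_target(url: str):
    """Return the all-lowercase URL to 301-redirect to, or None if none is needed."""
    parts = urlsplit(url)
    # Lowercase only the host and path; the query string is left as-is.
    lowered = parts._replace(netloc=parts.netloc.lower(), path=parts.path.lower())
    target = urlunsplit(lowered)
    return target if target != url else None


print(lowercase_redirect_target("https://www.example.com/Velo-de-Montagne"))
```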

#11. Shorter URLs: Shorter URLs are preferable. Ideally, your URL stays within 60 characters; if your URLs are pushing 100 characters, it’s worth devoting some time to rewriting them. Words that directly relate to the meaning and purpose of your page are the best choice. Think about an eCommerce site that sells apparel. Typically, you would create three sections, Men’s, Women’s, and Children’s, and then build the entire URL tree following a top-to-bottom hierarchical approach. The hierarchy of the website should be designed so that the homepage is never more than 2 clicks away.
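The length guideline above can be turned into a quick audit. This is only a sketch: the 60 and 100 character thresholds come from the tip itself, while the function name and the three labels are made up for illustration.

```python
def classify_url_length(url: str, preferred: int = 60, too_long: int = 100) -> str:
    """Label a URL as 'ok', 'review' or 'rewrite' based on its length."""
    length = len(url)
    if length <= preferred:
        return "ok"
    if length < too_long:
        return "review"
    return "rewrite"


for url in ["www.example.com/cat-dog-neighbour", "www.example.com/" + "x" * 90]:
    print(len(url), classify_url_length(url))
```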

Google looks at many things when they decide where a website ranks for certain keywords, and one of those is the actual URL of the webpage itself.

Content Management Systems That Make SEF URLs Easy

The choice of CMS is one of the most important factors deciding whether setting up search engine friendly (SEF) URLs will be a simple task or a tough nut to crack. Here is a list of content management systems (CMS) that give you the flexibility to make URLs clean, descriptive and hierarchically organized by default. Plenty of other CMSs provide this functionality, but these are among the most popular, secure and robust ones out there.

#1. WordPress: WordPress was initially developed for building blogs but is nowadays used as a content management system for almost every kind of website. A large number of media managers choose WordPress when setting up a website because of the perceived advantages of the platform.

Major Features:

1. Custom URLs and a clean permalink structure, excellent for SEO.

2. Users can re-arrange widgets without editing PHP or HTML code.

3. Rich plugin architecture which allows users and developers to extend its functionality beyond the features that come as part of the base install.

#2. Joomla: Joomla is the result of a fork of Mambo CMS on August 17, 2005. Within its first year of release, Joomla had been downloaded 2.5 million times.

Major Features:

1. You can manage multiple sites in multiple languages natively with Joomla (since Joomla 1.6). You can use it for a blogging site, corporate site, personal site, gallery, in short, whatever you can imagine.

2. Provides moderately descriptive URLs.

3. Provides a multi-level menu and content category system, template customization, advanced search and an RSS feed aggregator.

#3. Drupal: Though its share is shrinking, 1% of all websites on the Internet are based on this platform. Drupal started out way back in 2001 and is perhaps one of the oldest CMSs out there.

Major Features:

1. You can use it for a blogging site, corporate site, personal site, gallery, in short, whatever you can imagine.

2. You can use caching to increase its performance. It also provides high security, with notifications about new update releases.

3. Provides Search Engine Friendly descriptive URLs.

#4. Magento: An excellent piece of software should, beyond making it possible to customize the page design according to your expectations, also offer options to configure your online “shop window”, search features and all the processes in detail.

Major Features:

1. Within Magento you have the option to add URL Rewrites to make your webpage links more readable to both search engines and visitors.

2. The system has a remarkably rich administrative area, which lets you modify everything according to your needs, such as product features and categories or content targeted at different customer groups. Magento is a robust system capable of handling many things, but customizing .htaccess to achieve a highly custom URL structure does require an expert.

Conclusion:

URL structuring is a very important element of any website and perhaps the most fundamental building block of SEO. With the practices above in place, you’re good to go. Best of luck with all your URL creation and optimization efforts! Please feel free to leave any suggestions or ideas in the comments below.
